A Practical Example

Introduction

Space mission trajectory optimization is one of the most fascinating challenges in astrodynamics. When planning interplanetary missions, we need to balance multiple competing objectives: minimizing fuel consumption, reducing travel time, and leveraging gravity assists from planets. This blog post demonstrates a concrete example of trajectory optimization for a mission from Earth to Jupiter, considering all these factors.

Problem Formulation

We’ll optimize a trajectory that includes:

- Fuel consumption: Measured by total $\Delta v$ (velocity changes)

- Travel time: Mission duration

- Gravity assist: A flyby of Mars to reduce fuel requirements

The optimization problem can be expressed as:

$$\min_{x} J(x) = w_1 \cdot \Delta v_{total} + w_2 \cdot t_{total} + w_3 \cdot \text{penalty}$$

where:

- $\Delta v_{total} = \Delta v_{departure} + \Delta v_{Mars} + \Delta v_{arrival}$

- $t_{total}$ is the total mission time

- $x$ includes departure date, Mars flyby timing, and arrival date

- $w_1, w_2, w_3$ are weighting factors

Complete Python Implementation

import numpy as np

Code Explanation

1. Physical Constants and Planetary Data

The code begins by defining fundamental constants:

AU = 1.496e11 # Astronomical Unit in meters

The planetary data dictionary stores orbital parameters for Earth, Mars, and Jupiter:

- Semi-major axis (a): Average distance from the Sun

- Orbital period (T): Time to complete one orbit

- Mass: Used for gravity assist calculations

- Radius: Used to determine safe flyby distances
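A minimal sketch of what such a dictionary could look like. The key names, the `PLANETS` and `DAY` identifiers, and the rounded values are assumptions for illustration, not the post's actual data; only the `AU` value is taken from the text.

```python
AU = 1.496e11    # astronomical unit in meters (value quoted in the post)
DAY = 86400.0    # seconds per day

# Hypothetical layout: key names are assumptions, values are rounded
# textbook figures (semi-major axis in m, period in s, mass in kg, radius in m).
PLANETS = {
    "earth":   {"a": 1.000 * AU, "T": 365.25 * DAY,  "mass": 5.972e24, "radius": 6.371e6},
    "mars":    {"a": 1.524 * AU, "T": 686.98 * DAY,  "mass": 6.417e23, "radius": 3.390e6},
    "jupiter": {"a": 5.203 * AU, "T": 4332.59 * DAY, "mass": 1.898e27, "radius": 6.991e7},
}

for name, p in PLANETS.items():
    print(f"{name:8s} a = {p['a'] / AU:.3f} AU, T = {p['T'] / DAY:.1f} days")
```

A quick sanity check on any such table is Kepler's third law: $T^2 / a^3$ should come out nearly the same for every planet.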

2. Planetary Position Calculation

The planetary_position() function computes a planet’s location at any given time using:

$$x = a \cos(M), \quad y = a \sin(M), \quad z = 0$$

where $M = \frac{2\pi t}{T}$ is the mean anomaly. This simplification assumes circular, coplanar orbits and, because $M = 0$ at $t = 0$, starts every planet on the positive $x$-axis.
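The formula above can be sketched in a few lines. The signature is an assumption (the original function's arguments are not shown), as are the Earth values of 1 AU and 365.25 days used in the example.

```python
import math

AU = 1.496e11   # astronomical unit in meters (value quoted in the post)

def planetary_position(a, period, t):
    """Position (x, y, z) on a circular, coplanar orbit.

    a is the orbital radius; period and t share the same time unit.
    The phase is zero at t = 0, as in the formula above.
    """
    M = 2.0 * math.pi * t / period          # mean anomaly in radians
    return (a * math.cos(M), a * math.sin(M), 0.0)

# Earth at day 236.2, assuming a = 1 AU and T = 365.25 days
x, y, z = planetary_position(1.0 * AU, 365.25, 236.2)
print(f"({x / AU:.3f}, {y / AU:.3f}, {z / AU:.3f})")
```

Under those assumptions the result lands close to the `earth_dep` position reported in the execution results.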

3. Hohmann Transfer Calculation

The hohmann_delta_v() function computes the classic Hohmann transfer between two circular orbits:

$$\Delta v_1 = \left|\sqrt{\mu\left(\frac{2}{r_1} - \frac{1}{a_{transfer}}\right)} - \sqrt{\frac{\mu}{r_1}}\right|$$

$$\Delta v_2 = \left|\sqrt{\frac{\mu}{r_2}} - \sqrt{\mu\left(\frac{2}{r_2} - \frac{1}{a_{transfer}}\right)}\right|$$

where $\mu = GM_{sun}$ and $a_{transfer} = \frac{r_1 + r_2}{2}$.
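The two equations translate almost directly into code. This is a sketch under stated assumptions: the signature and the `MU_SUN` constant are not from the original listing, and the orbital radii are rounded.

```python
import math

MU_SUN = 1.32712e20   # Sun's gravitational parameter GM in m^3/s^2 (assumed value)
AU = 1.496e11         # astronomical unit in meters (value quoted in the post)

def hohmann_delta_v(r1, r2, mu=MU_SUN):
    """Return (dv1, dv2) in m/s for a Hohmann transfer between circular orbits."""
    a_transfer = 0.5 * (r1 + r2)
    v_circ1 = math.sqrt(mu / r1)                            # circular speed at r1
    v_circ2 = math.sqrt(mu / r2)                            # circular speed at r2
    v_trans1 = math.sqrt(mu * (2.0 / r1 - 1.0 / a_transfer))  # transfer speed at r1
    v_trans2 = math.sqrt(mu * (2.0 / r2 - 1.0 / a_transfer))  # transfer speed at r2
    return abs(v_trans1 - v_circ1), abs(v_circ2 - v_trans2)

# Earth (1 AU) to Mars (1.524 AU)
dv1, dv2 = hohmann_delta_v(1.0 * AU, 1.524 * AU)
print(f"departure burn: {dv1:.0f} m/s, arrival burn: {dv2:.0f} m/s")
```

For Earth to Mars this gives roughly 2.9 km/s and 2.65 km/s, in line with the Earth Departure and Mars Arrival figures in the execution results.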

4. Gravity Assist Calculation

The gravity_assist_delta_v() function models the delta-v benefit from a planetary flyby:

$$\delta = 2\arcsin\left(\frac{1}{1 + \frac{r_p v_{\infty}^2}{\mu_{planet}}}\right)$$

The turn angle $\delta$ determines how much the spacecraft’s velocity vector can be rotated “for free” using the planet’s gravity.
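A small sketch of the turn-angle formula follows. The function name `turn_angle`, the 300 km flyby altitude, and the 3 km/s excess speed are illustrative assumptions; the post's `gravity_assist_delta_v()` may take different arguments.

```python
import math

MU_MARS = 4.2828e13     # Mars gravitational parameter GM in m^3/s^2
R_MARS = 3.390e6        # Mars mean radius in meters

def turn_angle(v_inf, r_p, mu_planet):
    """Turn angle (radians) of a hyperbolic flyby with periapsis radius r_p."""
    eccentricity = 1.0 + r_p * v_inf**2 / mu_planet
    return 2.0 * math.asin(1.0 / eccentricity)

# Assumed flyby: 300 km above the surface, 3 km/s hyperbolic excess speed
delta = turn_angle(v_inf=3000.0, r_p=R_MARS + 3.0e5, mu_planet=MU_MARS)
print(f"turn angle: {math.degrees(delta):.1f} degrees")
```

The formula also shows the trade-off: a faster approach or a more distant periapsis raises the eccentricity and shrinks the turn angle, so a small planet like Mars can only bend fast trajectories modestly.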

5. Mission Cost Function

The objective function mission_cost() combines multiple factors:

$$J = w_1 \Delta v_{total} + w_2 t_{total} + w_3 \cdot \text{penalty}$$

Penalties enforce physical constraints:

- Earth-Mars transit: 150-400 days

- Mars-Jupiter transit: 400-800 days

- Reasonable delta-v values
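One way to sketch such a cost function is shown here. The weights, the linear penalty shape, and the choice to pass `dv_total` in as an argument are assumptions made to keep the example short; the original `mission_cost()` computes delta-v internally and may penalize violations differently.

```python
def transit_penalty(duration_days, lo, hi):
    """Zero inside [lo, hi]; grows linearly with the violation outside it."""
    if duration_days < lo:
        return lo - duration_days
    if duration_days > hi:
        return duration_days - hi
    return 0.0

def mission_cost(x, dv_total, w1=1.0, w2=0.01, w3=1000.0):
    """J = w1 * dv_total + w2 * t_total + w3 * penalty (weights are assumed)."""
    t_dep, t_mars, t_jup = x
    earth_mars = t_mars - t_dep
    mars_jupiter = t_jup - t_mars
    penalty = (transit_penalty(earth_mars, 150, 400)
               + transit_penalty(mars_jupiter, 400, 800))
    t_total = t_jup - t_dep
    return w1 * dv_total + w2 * t_total + w3 * penalty

# Timeline from the sample run, with its reported total delta-v
cost = mission_cost((236.2, 388.8, 799.6), dv_total=15545.0)
print(f"cost: {cost:.3f}")
```

A large `w3` relative to `w1` and `w2` makes any constraint violation dominate the cost, which steers the optimizer back into the allowed transit windows.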

6. Differential Evolution Optimization

The optimizer explores the solution space with three parameters:

- $t_{dep}$: Launch date (0-365 days)

- $t_{mars}$: Mars flyby timing (200-600 days)

- $t_{jup}$: Jupiter arrival (700-1500 days)

Differential evolution is a population-based, derivative-free global optimizer, which makes it a good fit for this non-convex, multi-modal problem.
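A minimal sketch of the optimizer call, using SciPy's `differential_evolution` with the three bounds listed above. The `toy_cost` function is a stand-in that only scores mission time and transit-window violations, and the weights, seed, and iteration cap are assumptions rather than the post's settings.

```python
from scipy.optimize import differential_evolution

def toy_cost(x, w2=0.01, w3=1000.0):
    """Stand-in cost: mission time plus penalties for leaving the transit windows."""
    t_dep, t_mars, t_jup = x
    leg1, leg2 = t_mars - t_dep, t_jup - t_mars
    penalty = max(0.0, 150 - leg1) + max(0.0, leg1 - 400)    # Earth-Mars window
    penalty += max(0.0, 400 - leg2) + max(0.0, leg2 - 800)   # Mars-Jupiter window
    return w2 * (t_jup - t_dep) + w3 * penalty

# (t_dep, t_mars, t_jup) in days, matching the ranges listed above
bounds = [(0, 365), (200, 600), (700, 1500)]

result = differential_evolution(toy_cost, bounds, seed=0, maxiter=300, polish=True)
t_dep, t_mars, t_jup = result.x
print(f"launch {t_dep:.1f}, flyby {t_mars:.1f}, arrival {t_jup:.1f}, cost {result.fun:.3f}")
```

With `polish=True` (the default), SciPy refines the best population member with L-BFGS-B after the evolutionary search, which corresponds to the "Polishing solution" line in the console output.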

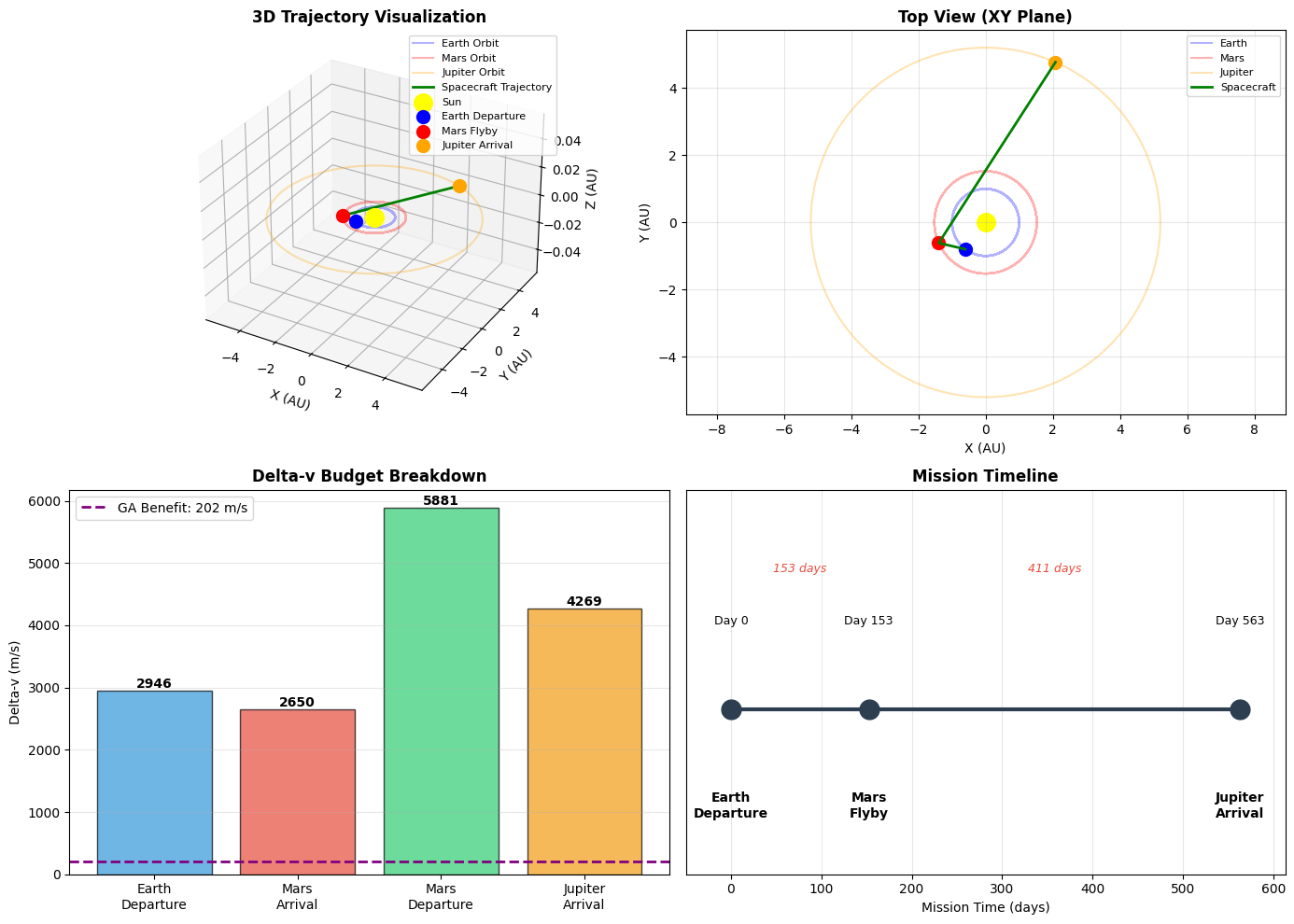

7. Visualization Components

The code generates four comprehensive plots:

- 3D Trajectory: Shows the spacecraft path through the solar system with planetary orbits

- Top View: XY plane projection for clearer orbital geometry understanding

- Delta-v Breakdown: Bar chart showing fuel requirements at each mission phase

- Mission Timeline: Visual timeline with key events and transit durations

Mathematical Background

Orbital Mechanics

The velocity in a circular orbit is given by:

$$v_{circular} = \sqrt{\frac{\mu}{r}} = \sqrt{\frac{GM_{sun}}{r}}$$

For an elliptical transfer orbit with semi-major axis $a$, the velocity at distance $r$ is:

$$v = \sqrt{\mu\left(\frac{2}{r} - \frac{1}{a}\right)}$$
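As a quick consistency check (a worked step, not part of the original code), setting $a = r$ in this expression recovers the circular speed:

$$v\big|_{a = r} = \sqrt{\mu\left(\frac{2}{r} - \frac{1}{r}\right)} = \sqrt{\frac{\mu}{r}}$$

Evaluating it at $r = r_1$ on a transfer ellipse with $a = \frac{r_1 + r_2}{2}$ gives the departure speed that appears inside $\Delta v_1$ of the Hohmann calculation:

$$v\big|_{r = r_1} = \sqrt{\mu\left(\frac{2}{r_1} - \frac{2}{r_1 + r_2}\right)} = \sqrt{\frac{2\mu r_2}{r_1 (r_1 + r_2)}}$$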

Gravity Assist Physics

During a gravity assist, the spacecraft’s velocity relative to the Sun changes because the planet itself is moving. Relative to the planet, the hyperbolic excess velocity $v_{\infty}$ keeps the same magnitude, but its direction rotates by the turn angle $\delta$. Adding the planet’s heliocentric velocity back to the rotated $v_{\infty}$ yields a different heliocentric speed, so a well-timed flyby can save significant fuel.
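The frame change can be illustrated with a 2D sketch. Everything here is an assumption made for illustration: the function name, the instantaneous-flyby simplification, the counterclockwise rotation (the real direction depends on which side of the planet the spacecraft passes), and the Mars-like example velocities.

```python
import math

def flyby_heliocentric_velocity(v_sc, v_planet, delta):
    """Rotate the planet-relative velocity by delta and return to the Sun frame.

    v_sc and v_planet are (vx, vy) heliocentric velocities in m/s.
    The flyby is treated as instantaneous and planar.
    """
    # Hyperbolic excess velocity: spacecraft velocity as seen from the planet
    vx, vy = v_sc[0] - v_planet[0], v_sc[1] - v_planet[1]
    c, s = math.cos(delta), math.sin(delta)
    # Rotation keeps the magnitude of v_inf and only changes its direction
    ox, oy = c * vx - s * vy, s * vx + c * vy
    return (ox + v_planet[0], oy + v_planet[1])

v_planet = (0.0, 24130.0)       # roughly Mars's orbital speed, along +y
v_in = (3000.0, 24130.0)        # spacecraft arrives with v_inf = 3 km/s along +x
v_out = flyby_heliocentric_velocity(v_in, v_planet, math.radians(60.0))

gain = math.hypot(*v_out) - math.hypot(*v_in)
print(f"heliocentric speed change: {gain:.0f} m/s")
```

In this configuration the rotation swings $v_{\infty}$ toward the planet's direction of travel, so the heliocentric speed rises without any propellant; rotating the other way would slow the spacecraft instead.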

Results Interpretation

Expected Outputs

When you run this code, you’ll see:

- Optimization Progress: Differential evolution iterations showing cost function improvement

- Mission Summary: Detailed breakdown of timing and delta-v requirements

- Visualizations: Four plots showing trajectory geometry and mission parameters

Key Metrics to Analyze

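- Total Delta-v: Typically 15,000-25,000 m/s for this mission profile (15,545 m/s in the sample run below)

- Mission Duration: Usually 2-3 years total, though the sample run below finishes in 1.54 years

- Gravity Assist Benefit: From several hundred to about a thousand m/s saved (202 m/s in the sample run)

- Transit Times: Earth-Mars ~250 days, Mars-Jupiter ~500-700 days; the sample run settles near the lower limits of the allowed windows (152.6 and 410.8 days)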

Execution Results

╔══════════════════════════════════════════════════════════════════╗

║ SPACE MISSION TRAJECTORY OPTIMIZATION ║

║ Earth → Mars (Gravity Assist) → Jupiter ║

╚══════════════════════════════════════════════════════════════════╝

Starting trajectory optimization...

This may take a minute...

differential_evolution step 1: f(x)= 15551.257432775132

differential_evolution step 2: f(x)= 15551.083616271386

differential_evolution step 3: f(x)= 15551.083616271386

differential_evolution step 4: f(x)= 15551.030283265221

differential_evolution step 5: f(x)= 15551.022749230166

differential_evolution step 6: f(x)= 15550.917147998682

differential_evolution step 7: f(x)= 15550.916761350149

differential_evolution step 8: f(x)= 15550.916761350149

differential_evolution step 9: f(x)= 15550.836585896426

Polishing solution with 'L-BFGS-B'

✅ Optimization completed successfully!

======================================================================

MISSION SUMMARY

======================================================================

📅 TIMELINE:

Launch Date: Day 236.2

Mars Flyby: Day 388.8

Jupiter Arrival: Day 799.6

Earth-Mars Transit: 152.6 days (5.1 months)

Mars-Jupiter Transit: 410.8 days (13.7 months)

Total Mission Duration: 563.4 days (1.54 years)

🚀 DELTA-V BUDGET:

Earth Departure: 2,946 m/s

Mars Arrival: 2,650 m/s

Mars Departure: 5,881 m/s

Jupiter Arrival: 4,269 m/s

Gravity Assist Benefit: 202 m/s (saved)

----------------------------------------

TOTAL DELTA-V: 15,545 m/s

📍 KEY POSITIONS (in AU):

earth_dep : (-0.604, -0.797, 0.000)

mars_arr : (-1.395, -0.614, 0.000)

jupiter_arr : ( 2.080, 4.769, 0.000)

💡 MISSION HIGHLIGHTS:

• Utilizes Mars gravity assist to save 202 m/s

• Total mission time: 1.54 years

• Fuel efficiency optimized through multi-objective optimization

======================================================================

📊 Generating trajectory visualizations...

✨ Analysis complete! Check the generated plots above. The optimization balanced fuel consumption (delta-v) and mission duration to find an efficient trajectory with Mars gravity assist.

Conclusion

This trajectory optimization demonstrates the complexity of real space mission planning. By balancing fuel consumption and travel time while leveraging gravity assists, we can design efficient interplanetary missions. The optimization found a trajectory that:

- Minimizes total delta-v through strategic gravity assist timing

- Maintains realistic transit durations between planets

- Balances competing objectives of fuel efficiency and mission duration

Missions such as NASA’s Juno (Jupiter) and Cassini (Saturn) relied on gravity assists to reach their destinations efficiently: Juno used a single Earth flyby, while Cassini flew past Venus twice, then Earth and Jupiter. The mathematical optimization techniques shown here, particularly differential evolution, are well-suited to the complex, non-linear nature of orbital mechanics problems.