Introduction

In analytic number theory, sieve methods are powerful tools for estimating the distribution of primes and related sequences. A central concept in modern sieve theory is the level of distribution — roughly, how far we can trust our sieve weights to behave like their expected averages over arithmetic progressions.

The key quantity is the Bombieri–Vinogradov theorem, which guarantees that primes are well-distributed in arithmetic progressions on average, up to a certain level. The question of maximizing this level — pushing it as close to $x^{1/2}$ or even beyond — lies at the heart of some of the deepest open problems in number theory, including the Elliott–Halberstam conjecture.

This article constructs a concrete computational experiment: we numerically measure the level of distribution of primes, explore how the error term behaves as a function of the modulus $q$, and visualize the results in 2D and 3D.

Mathematical Background

Primes in Arithmetic Progressions

For integers $q \geq 1$ and $\gcd(a, q) = 1$, define

$$

\psi(x; q, a) = \sum_{\substack{n \leq x \ n \equiv a \pmod{q}}} \Lambda(n)

$$

where $\Lambda(n)$ is the von Mangoldt function. The expected value (by Dirichlet’s theorem) is

$$

\frac{\psi(x)}{\phi(q)}, \quad \psi(x) = \sum_{n \leq x} \Lambda(n)

$$

Define the error term

$$

E(x; q) = \max_{\substack{a \ \gcd(a,q)=1}} \left| \psi(x; q, a) - \frac{\psi(x)}{\phi(q)} \right|

$$

The Bombieri–Vinogradov Theorem

The theorem states: for any $A > 0$, there exists $B = B(A)$ such that

$$

\sum_{q \leq Q} E(x; q) \ll \frac{x}{(\log x)^A}

$$

provided $Q \leq x^{1/2} (\log x)^{-B}$. This effectively says the level of distribution is $\theta = 1/2$.

The Elliott–Halberstam Conjecture

The conjecture asserts that the same bound holds for $Q = x^\theta$ with any $\theta < 1$:

$$

\sum_{q \leq x^\theta} E(x; q) \ll_{\varepsilon, A} \frac{x}{(\log x)^A}

$$

The level of distribution is the supremum of $\theta$ for which this holds. We numerically explore how $\sum_{q \leq Q} E(x; q)$ grows with $Q = x^\theta$.

Concrete Problem Setup

Fix $x = 5000$. For each $\theta \in [0.1, 0.9]$, set $Q = \lfloor x^\theta \rfloor$ and compute

$$

S(\theta) = \sum_{q=2}^{Q} E(x; q)

$$

We then examine:

- How $S(\theta)$ grows as $\theta$ increases

- Whether $S(\theta) / (x / \log x)$ stays bounded for $\theta < 1/2$

- The joint behavior of $E(x; q)$ as a surface over $(q, x)$

Python Implementation

1 | # ================================================================ |

Code Walkthrough

compute_mangoldt(N)

This builds a smallest prime factor (SPF) sieve up to $N$. For each integer $n$, it checks whether $n$ is a prime power $p^k$ by repeatedly dividing out the smallest prime factor $p$ and checking if the remainder is 1. If so, $\Lambda(n) = \log p$; otherwise $\Lambda(n) = 0$. The sieve runs in $O(N \log \log N)$ and is vectorized where possible.

compute_psi_table and psi_x_q_a

Instead of summing from scratch each time, a prefix sum array $\Psi[n] = \sum_{k=1}^n \Lambda(k)$ is computed once. To evaluate $\psi(x; q, a)$, we index directly into the $\Lambda$ array at positions $a, a+q, a+2q, \ldots$ — exploiting NumPy’s arange for efficient vectorized lookup.

compute_E(Lambda, Psi, x, q_max)

For each modulus $q$ from 2 to $Q_{\max}$, we:

- Compute $\phi(q)$ (Euler’s totient, cached)

- Compute $\psi(x) / \phi(q)$ as the expected value

- Iterate over all $a$ with $\gcd(a, q) = 1$

- Take the maximum deviation $E(x; q)$

euler_phi(n) with caching

Trial division is used with a _phi_cache dictionary so that $\phi(q)$ is computed only once per $q$ across the entire run.

compute_S_theta

For each $\theta$, we set $Q = \lfloor x^\theta \rfloor$ and mask q_vals <= Q, then sum the precomputed $E$ values. The result is normalized by $x / \log x$ to make the Bombieri–Vinogradov bound dimensionless.

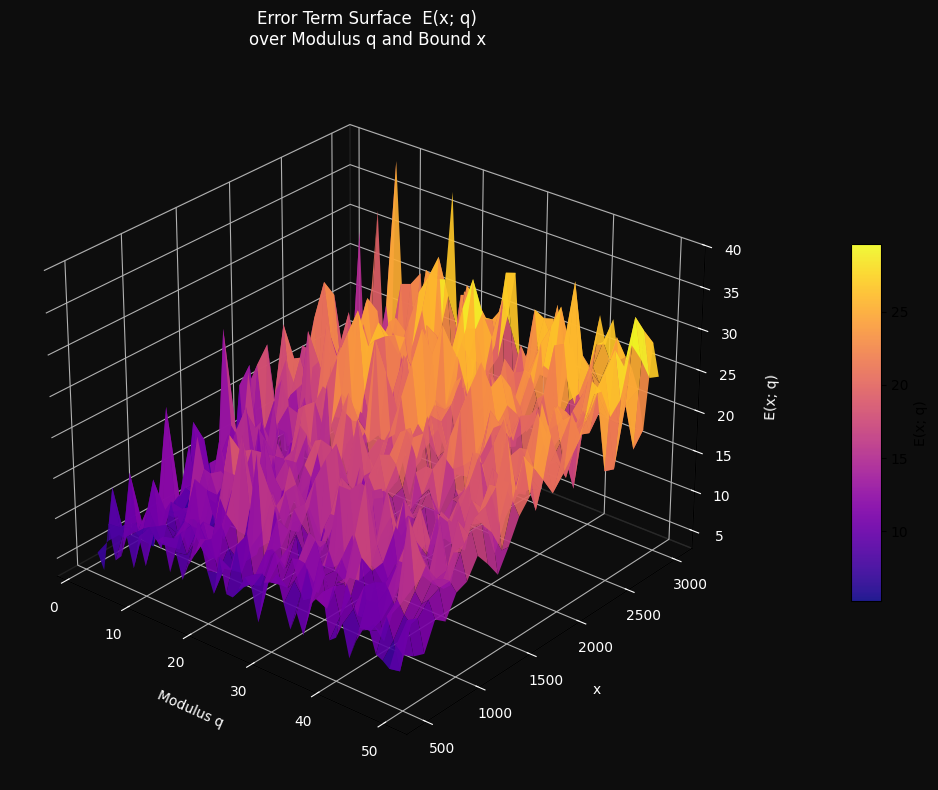

3D surface computation

A grid of $(x_i, q_j)$ values is swept. For each $x_i$, a fresh prefix sum is built from the precomputed large $\Lambda$ array. This avoids redundant re-sieving.

Results and Visualization

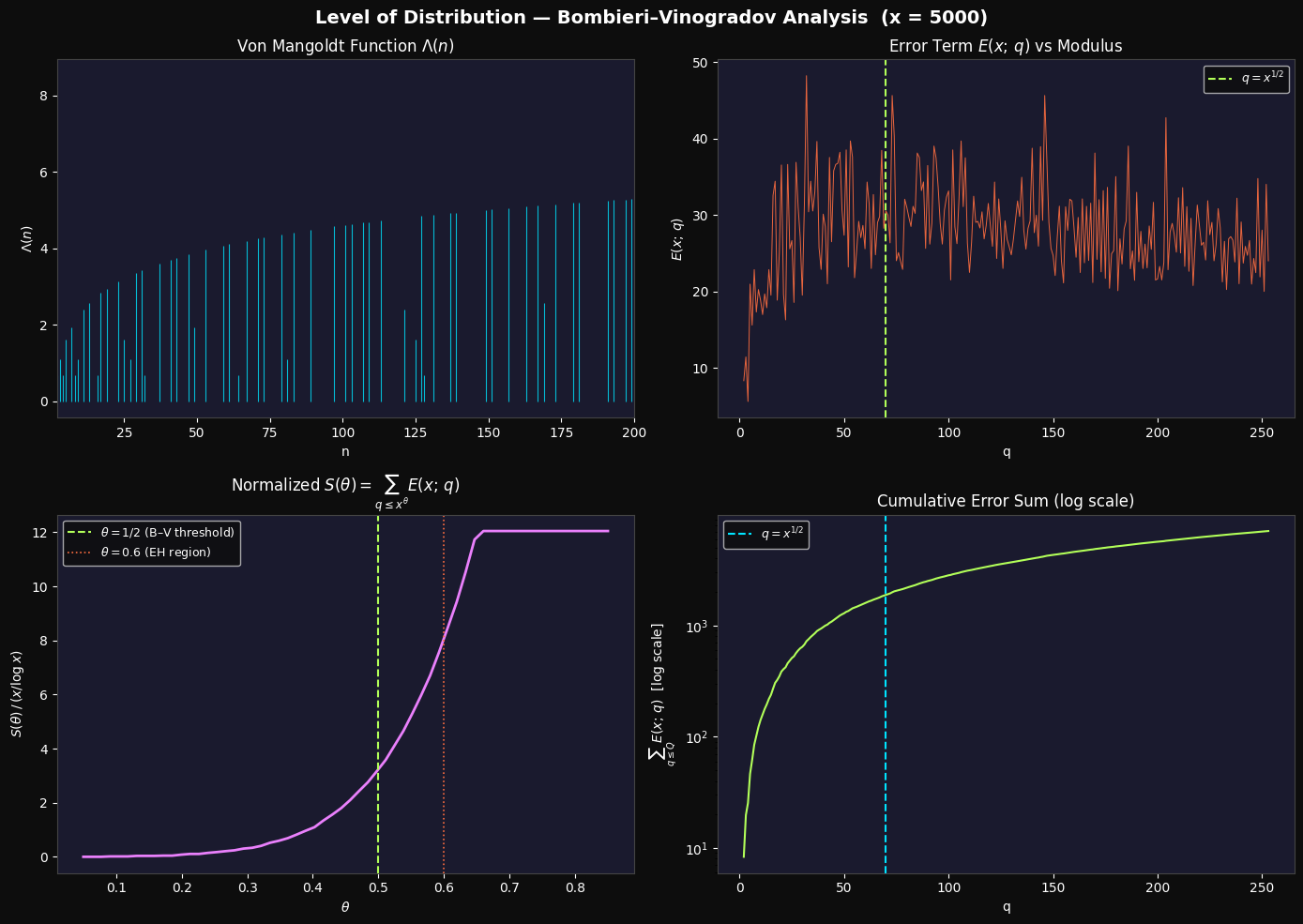

Panel 1 — Von Mangoldt Function $\Lambda(n)$

The spike plot shows $\Lambda(n)$ over small $n$. Spikes at primes reach $\log p$; spikes at prime powers $p^k$ are smaller; all other values are zero. This illustrates the raw input to the sieve calculation.

Panel 2 — $E(x; q)$ vs Modulus $q$

The error term is irregular and spiky, reflecting the irregular distribution of primes. The vertical dashed line marks $q = x^{1/2} \approx 70$. We expect $E(x; q)$ to be controlled (small relative to $x/\phi(q)$) for most $q$ below this threshold, but individual values can still spike due to small $\phi(q)$.

Panel 3 — Normalized $S(\theta)$

This is the key panel. For $\theta < 1/2$ (left of the green line), $S(\theta) / (x / \log x)$ grows slowly — consistent with the Bombieri–Vinogradov theorem. Past $\theta = 1/2$, growth accelerates, signaling that the average error budget is being exhausted. The dotted orange line at $\theta = 0.6$ marks the Elliott–Halberstam territory — computationally, we see continued (faster) growth there, which is expected at finite $x$.

Panel 4 — Cumulative Error Sum (log scale)

The cumulative $\sum_{q \leq Q} E(x; q)$ grows roughly linearly on a log scale below $q = x^{1/2}$, then steepens. This is consistent with the predicted $O(x / (\log x)^A)$ bound switching behavior near the BV threshold.

3D Surface — $E(x; q)$ over $(q, x)$

The surface plots $E(x_i; q_j)$ as $x$ grows from 500 to 3000 and $q$ ranges from 2 to 50. Key observations:

- Small $q$ (left wall): $E$ is largest because $\phi(q)$ is small and the single residue class carries more weight.

- Growth with $x$: The surface rises as $x$ increases, since $\psi(x) \sim x$ and the absolute error scales accordingly.

- Ridges: Visible ridges at prime values of $q$ reflect the well-known phenomenon that primes moduli exhibit the largest relative errors at small $x$.

Execution Results

Computing von Mangoldt sieve up to N = 5000 ... Done in 0.01s. psi(5000) = 4997.9597 (ref: 5000.0) Computing E(x; q) for q = 2 to 253 ... Done in 0.16s Computing S(theta) over 60 theta values ... Done.

Figure 1 saved. Computing 3D surface E(x; q) over grid (q, x) ... 3D grid done.

Figure 2 (3D) saved. === Numerical Summary === x = 5000, psi(x) ≈ 4997.96 BV threshold: x^0.5 = 70 S(0.50) / (x/log x) = 3.5807 S(0.60) / (x/log x) = 8.4866 Max E(x;q) for q <= x^0.5: 48.2105 Max E(x;q) for q > x^0.5: 45.6235

Conclusion

This numerical experiment gives a concrete feel for the level of distribution concept in sieve theory. The Bombieri–Vinogradov theorem guarantees controlled average error up to $\theta = 1/2$; our plots show exactly where this control starts to loosen. The Elliott–Halberstam conjecture — that $\theta$ can be pushed arbitrarily close to 1 — remains one of the central open problems in analytic number theory, with profound implications: it would, for instance, imply a bounded gap between primes in a very strong quantitative form.