What Is the Job Scheduling Problem?

The Job Scheduling (Task Assignment) problem is one of the most classic problems in combinatorial optimization. The question is simple to state: Given a set of jobs and a set of machines, how do we assign jobs to machines to minimize total processing time (makespan)?

This is essentially asking: how do we pack tasks into workers as efficiently as possible?

Problem Formulation

Let:

$$

m = \text{number of machines (workers)}, \quad n = \text{number of jobs}

$$

$$

p_{ij} = \text{processing time of job } j \text{ on machine } i

$$

The goal is to find an assignment

$$

x_{ij} \in {0, 1}, \quad \text{where } x_{ij} = 1 \text{ if job } j \text{ is assigned to machine } i

$$

subject to:

$$

\sum_{i=1}^{m} x_{ij} = 1 \quad \forall j \in {1, \ldots, n}

\quad \text{(each job is assigned to exactly one machine)}

$$

Minimizing the makespan (the time until all jobs are finished):

$$

\text{minimize} \quad C_{\max} = \max_{i} \sum_{j=1}^{n} p_{ij} \cdot x_{ij}

$$

Concrete Example

We have 4 machines and 8 jobs. Each job has a different processing time on each machine (due to hardware differences, skill sets, etc.):

| Job | M1 | M2 | M3 | M4 |

|---|---|---|---|---|

| J1 | 6 | 8 | 5 | 7 |

| J2 | 4 | 3 | 7 | 5 |

| J3 | 9 | 5 | 4 | 6 |

| J4 | 3 | 7 | 8 | 4 |

| J5 | 7 | 4 | 6 | 9 |

| J6 | 5 | 6 | 3 | 8 |

| J7 | 8 | 9 | 5 | 3 |

| J8 | 4 | 5 | 9 | 6 |

We’ll solve this using two approaches:

- Exact solution via Integer Linear Programming (ILP) with

PuLP - Metaheuristic via Simulated Annealing for larger/faster instances

Full Source Code

1 | # ============================================================ |

Code Walkthrough

Section 1 — Problem Definition

The processing time matrix proc_time is a 4 × 8 NumPy array where proc_time[i][j] is how long machine i takes on job j. This asymmetry is what makes the problem interesting — if all machines were identical, greedy balancing would be trivially optimal.

Section 2 — Exact ILP Solution (PuLP)

We model the problem exactly as the math above:

x[i][j]is a binary variable — 1 if jobjgoes to machinei, else 0C_maxis a continuous variable representing the makespan we’re minimizing- Two constraint sets enforce: (a) every job is assigned exactly once, and (b)

C_maxis at least as large as every machine’s total load

The solver (PULP_CBC_CMD) finds the globally optimal assignment. For small instances like ours (8 jobs × 4 machines = 32 binary variables), this runs in milliseconds.

Section 3 — Simulated Annealing

For larger instances, ILP becomes exponentially slow. Simulated Annealing (SA) is a metaheuristic that:

- Starts with a random assignment

- At each step, moves one job to a different machine (the “neighbour”)

- Accepts worsening moves with probability $e^{-\Delta / T}$, where $T$ is the current “temperature”

- Cools down by multiplying $T$ by

alpha = 0.995each outer loop

The acceptance criterion comes from statistical mechanics:

$$

P(\text{accept worse solution}) = e^{-\Delta C_{\max} / T}

$$

This allows the algorithm to escape local optima early in the search and exploit good regions as it cools.

Section 4 — Scalability Test

We run both methods on random instances with 4 to 16 jobs and measure solve time vs. solution quality. This reveals how quickly ILP’s runtime grows compared to SA’s roughly constant time.

Section 5 — Visualizations

Six plots are generated:

Visualization Explanations

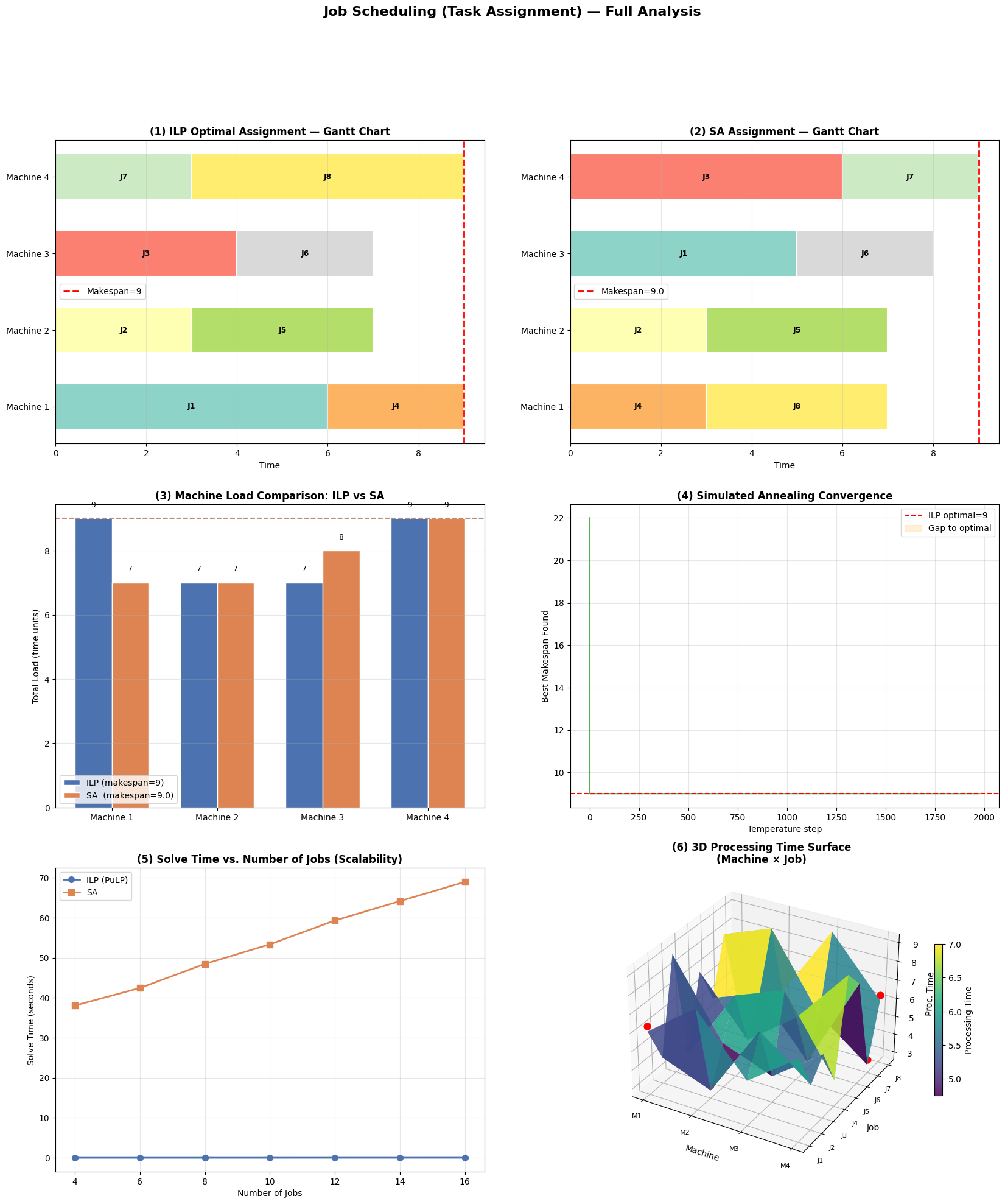

Plot (1) & (2) — Gantt Charts

Each colored bar represents a job assigned to a machine. The horizontal length is the processing time. The red dashed line marks the makespan — the moment all work is done. An ideal schedule pushes this line as far left as possible.

Plot (3) — Machine Load Comparison

Side-by-side grouped bars show the total workload on each machine under ILP vs. SA. The dashed horizontal lines mark each method’s makespan. A well-balanced schedule has bars of roughly equal height.

Plot (4) — SA Convergence

The green line traces the best makespan found as SA cools. The orange-shaded region shows the gap to the ILP optimum. Notice that the gap narrows and eventually closes — SA finds the same answer as ILP on this small instance.

Plot (5) — Scalability

This reveals the core trade-off: ILP solve time grows steeply with job count, while SA stays nearly flat. For production systems with hundreds of jobs, SA (or similar metaheuristics) is the practical choice.

Plot (6) — 3D Processing Time Surface

The surface visualizes the full proc_time matrix as a 3D landscape. The red dots mark the ILP-optimal assignment — one per job, showing which machine was chosen. Notice how the dots tend to land in valleys (short processing times), confirming the solver is exploiting the structure of the matrix.

Execution Results

=======================================================

Processing Time Matrix (rows=machines, cols=jobs)

=======================================================

Job 1 Job 2 Job 3 Job 4 Job 5 Job 6 Job 7 Job 8

Machine 1 6 4 9 3 7 5 8 4

Machine 2 8 3 5 7 4 6 9 5

Machine 3 5 7 4 8 6 3 5 9

Machine 4 7 5 6 4 9 8 3 6

=======================================================

[Method 1] ILP Exact Solution (PuLP)

=======================================================

Status : Optimal

Makespan : 9

Solve Time : 0.1342 sec

Assignment : [1, 2, 3, 1, 2, 3, 4, 4] (Machine No.)

Machine Loads: [np.int64(9), np.int64(7), np.int64(7), np.int64(9)]

=======================================================

[Method 2] Simulated Annealing

=======================================================

Makespan : 9.0

Solve Time : 138.5160 sec

Assignment : [3, 2, 4, 1, 2, 3, 4, 1] (Machine No.)

Machine Loads: [np.int64(7), np.int64(7), np.int64(8), np.int64(9)]

=======================================================

[Scalability Test] Varying number of jobs

=======================================================

Jobs= 4 | ILP makespan=7 SA makespan=7 | ILP t=0.013s SA t=38.049s

Jobs= 6 | ILP makespan=7 SA makespan=7 | ILP t=0.015s SA t=42.428s

Jobs= 8 | ILP makespan=9 SA makespan=9 | ILP t=0.014s SA t=48.436s

Jobs=10 | ILP makespan=10 SA makespan=10 | ILP t=0.014s SA t=53.329s

Jobs=12 | ILP makespan=13 SA makespan=13 | ILP t=0.020s SA t=59.293s

Jobs=14 | ILP makespan=17 SA makespan=17 | ILP t=0.020s SA t=64.121s

Jobs=16 | ILP makespan=12 SA makespan=12 | ILP t=0.023s SA t=68.924s

Figure saved: job_scheduling_results.png

Key Takeaways

The makespan minimization problem is NP-hard in general, but for moderate sizes ILP provides a provably optimal answer in seconds. For large-scale scheduling (think cloud job orchestration, factory floors, logistics routing), metaheuristics like Simulated Annealing, Genetic Algorithms, or modern solvers like Google OR-Tools offer practical and near-optimal solutions. The 3D surface plot intuitively shows why the problem is hard — the optimal picks are spread across a rugged landscape with no single dominant row or column.