Optimizing Wave Functions by Minimizing Energy

The variational principle is one of the most powerful tools in quantum mechanics. It states that for any trial wave function $|\psi\rangle$, the expectation value of the Hamiltonian is always greater than or equal to the true ground state energy $E_0$:

$$E[\psi] = \frac{\langle \psi | \hat{H} | \psi \rangle}{\langle \psi | \psi \rangle} \geq E_0$$

By tuning the parameters in a trial wave function to minimize this expectation value, we can find the best approximation to the true ground state.

Example Problem: Quantum Harmonic Oscillator

The Hamiltonian is:

$$\hat{H} = -\frac{\hbar^2}{2m}\frac{d^2}{dx^2} + \frac{1}{2}m\omega^2 x^2$$

In natural units ($\hbar = m = \omega = 1$):

$$\hat{H} = -\frac{1}{2}\frac{d^2}{dx^2} + \frac{1}{2}x^2$$

The exact ground state energy is $E_0 = \frac{1}{2}$.

We use a Gaussian trial wave function:

$$\psi_\alpha(x) = \left(\frac{2\alpha}{\pi}\right)^{1/4} e^{-\alpha x^2}$$

where $\alpha > 0$ is the variational parameter. The energy expectation value is:

$$E(\alpha) = \frac{\alpha}{2} + \frac{1}{8\alpha}$$

We minimize $E(\alpha)$ with respect to $\alpha$.
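As a sanity check on the closed form for $E(\alpha)$, the expectation value can also be evaluated by direct numerical integration. The following is a minimal sketch, not the article's original listing: the helper names `psi`, `d2psi`, `energy_quadrature`, and `energy_closed_form` are made up for illustration, and it assumes SciPy's `quad` is available.

```python
import numpy as np
from scipy.integrate import quad


def psi(x, alpha):
    """Normalized Gaussian trial function (2*alpha/pi)^(1/4) * exp(-alpha*x^2)."""
    return (2.0 * alpha / np.pi) ** 0.25 * np.exp(-alpha * x**2)


def d2psi(x, alpha):
    """Analytic second derivative of psi with respect to x."""
    return (4.0 * alpha**2 * x**2 - 2.0 * alpha) * psi(x, alpha)


def energy_quadrature(alpha):
    """<psi|H|psi> for H = -1/2 d^2/dx^2 + 1/2 x^2 (natural units), by quadrature."""
    def integrand(x):
        kinetic = -0.5 * d2psi(x, alpha)
        potential = 0.5 * x**2 * psi(x, alpha)
        return psi(x, alpha) * (kinetic + potential)

    value, _ = quad(integrand, -np.inf, np.inf)
    return value


def energy_closed_form(alpha):
    """Closed-form variational energy quoted in the text."""
    return alpha / 2.0 + 1.0 / (8.0 * alpha)


for alpha in (0.2, 0.5, 1.0, 2.0):
    print(f"alpha = {alpha:3.1f}  quadrature = {energy_quadrature(alpha):.6f}  "
          f"closed form = {energy_closed_form(alpha):.6f}")
```

Because the trial function is already normalized, no division by $\langle\psi|\psi\rangle$ is needed here.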

Full Python Source Code

import numpy as np

Code Walkthrough

Section 1 — Analytical Variational Energy

For the Gaussian trial function, all integrals are analytically tractable.

The kinetic energy contribution is:

$$\langle T \rangle = \frac{\alpha}{2}$$

The potential energy contribution is:

$$\langle V \rangle = \frac{1}{8\alpha}$$

So the total variational energy is:

$$E(\alpha) = \frac{\alpha}{2} + \frac{1}{8\alpha}$$
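For readers who want the intermediate steps, both contributions follow from the second moment of the Gaussian density and the second derivative of the trial function. This is a short worked derivation under the natural units used above:

$$\langle x^2 \rangle = \int_{-\infty}^{\infty} \psi_\alpha^2(x)\, x^2 \, dx = \frac{1}{4\alpha}, \qquad \psi_\alpha''(x) = \left(4\alpha^2 x^2 - 2\alpha\right)\psi_\alpha(x)$$

$$\langle T \rangle = -\frac{1}{2}\int_{-\infty}^{\infty} \psi_\alpha \psi_\alpha'' \, dx = -\frac{1}{2}\left(4\alpha^2 \langle x^2 \rangle - 2\alpha\right) = \frac{\alpha}{2}$$

$$\langle V \rangle = \frac{1}{2}\langle x^2 \rangle = \frac{1}{8\alpha}$$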

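`minimize_scalar` from SciPy finds the minimum over $\alpha$ numerically. Analytically, setting $\frac{dE}{d\alpha} = \frac{1}{2} - \frac{1}{8\alpha^2} = 0$ gives $\alpha_{opt}=\frac{1}{2}$ and $E(\alpha_{opt}) = \frac{1}{4} + \frac{1}{4} = \frac{1}{2}$. This reproduces the exact ground state energy because $\psi_{1/2}(x) = \pi^{-1/4} e^{-x^2/2}$ is the exact ground state wave function of this problem.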
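The minimization described above can be sketched in a few lines. This is an illustrative version rather than the original listing: the search interval `(0.01, 5.0)` and the variable names are assumptions.

```python
from scipy.optimize import minimize_scalar


def variational_energy(alpha):
    """E(alpha) = alpha/2 + 1/(8*alpha) for the Gaussian trial function."""
    return alpha / 2.0 + 1.0 / (8.0 * alpha)


# Bounded search keeps alpha strictly positive; the interval is an assumption.
result = minimize_scalar(variational_energy, bounds=(0.01, 5.0), method="bounded")

alpha_opt = result.x
e_min = result.fun
exact_e0 = 0.5

print(f"Optimal alpha     : {alpha_opt:.6f}")
print(f"Variational E_min : {e_min:.6f}")
print(f"Exact E_0         : {exact_e0:.6f}")
print(f"Error             : {abs(e_min - exact_e0):.2e}")
```

A bounded method is used so the optimizer never probes $\alpha \leq 0$, where the trial function is not normalizable.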

Section 2 — Numerical Solution via Finite Differences

Instead of relying on analytics, we discretize $\hat{H}$ on a real-space grid using a 3-point stencil for $-\frac{1}{2}\frac{d^2}{dx^2}$:

$$\left.\frac{d^2\psi}{dx^2}\right|_i \approx \frac{\psi_{i+1} - 2\psi_i + \psi_{i-1}}{(\Delta x)^2}$$

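This turns $\hat{H}$ into a sparse, symmetric (tridiagonal) matrix, and `scipy.linalg.eigh` extracts the lowest `num_states` eigenvalues and eigenvectors. This approach makes no assumption about the functional form of the wave function. Unlike the trial-function calculation, however, it is not strictly variational: the discretization error of the stencil can push the computed eigenvalues slightly below the exact ones, as the finite-difference values in the Execution Results show.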
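A minimal sketch of this discretization is shown below. The grid limits (`x_max = 8.0`), the number of points (`n_points = 801`), and the variable names are assumptions chosen for illustration, not values taken from the original listing.

```python
import numpy as np
from scipy.linalg import eigh

# Real-space grid (assumed values): x in [-8, 8] with spacing 0.02.
x_max = 8.0
n_points = 801
x = np.linspace(-x_max, x_max, n_points)
dx = x[1] - x[0]

# -1/2 d^2/dx^2 with the 3-point stencil gives +1/dx^2 on the diagonal
# and -1/(2 dx^2) on the first off-diagonals; the potential is diagonal.
main_diag = 1.0 / dx**2 + 0.5 * x**2
off_diag = np.full(n_points - 1, -0.5 / dx**2)
H = np.diag(main_diag) + np.diag(off_diag, 1) + np.diag(off_diag, -1)

# Lowest num_states eigenpairs of the dense symmetric matrix.
num_states = 5
energies, states = eigh(H, subset_by_index=[0, num_states - 1])

# Rescale eigenvectors so that sum(|psi|^2) * dx = 1 on the grid.
states /= np.sqrt(dx)

for n, e in enumerate(energies):
    print(f"E_{n} = {e:.6f}  (exact = {n + 0.5:.6f})")
```

The matrix is stored densely here for simplicity; for much larger grids a tridiagonal or sparse solver would be the more economical choice.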

Section 3 — Trial Wave Functions for Different $\alpha$

We compare four values $\alpha \in \{0.2, 0.5, 1.0, 2.0\}$. A small $\alpha$ gives a broad, spread-out wave function (over-delocalized), while a large $\alpha$ gives a very narrow wave function (over-localized). Only $\alpha = 0.5$ matches the exact solution, and it uniquely minimizes $E(\alpha)$.
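The comparison can be made quantitative by tabulating, for each $\alpha$, the variational energy, the RMS width $\sqrt{\langle x^2 \rangle} = 1/(2\sqrt{\alpha})$, and the overlap with the exact ground state. The sketch below is illustrative: the grid and the `rows` container are assumptions, and the overlap is computed with a simple Riemann sum.

```python
import numpy as np

# Integration grid (assumed): wide enough that all four Gaussians have decayed.
x = np.linspace(-10.0, 10.0, 4001)
dx = x[1] - x[0]


def psi(x, alpha):
    """Normalized Gaussian trial function."""
    return (2.0 * alpha / np.pi) ** 0.25 * np.exp(-alpha * x**2)


psi_exact = psi(x, 0.5)  # exact ground state corresponds to alpha = 1/2

rows = []
for alpha in (0.2, 0.5, 1.0, 2.0):
    energy = alpha / 2.0 + 1.0 / (8.0 * alpha)
    rms_width = 1.0 / (2.0 * np.sqrt(alpha))
    overlap = np.sum(psi(x, alpha) * psi_exact) * dx
    rows.append((alpha, energy, rms_width, overlap))
    print(f"alpha = {alpha:3.1f}  E = {energy:.4f}  "
          f"rms width = {rms_width:.4f}  overlap = {overlap:.4f}")
```

Only the $\alpha = 0.5$ row reaches an overlap of 1, while the energy rises on either side of it.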

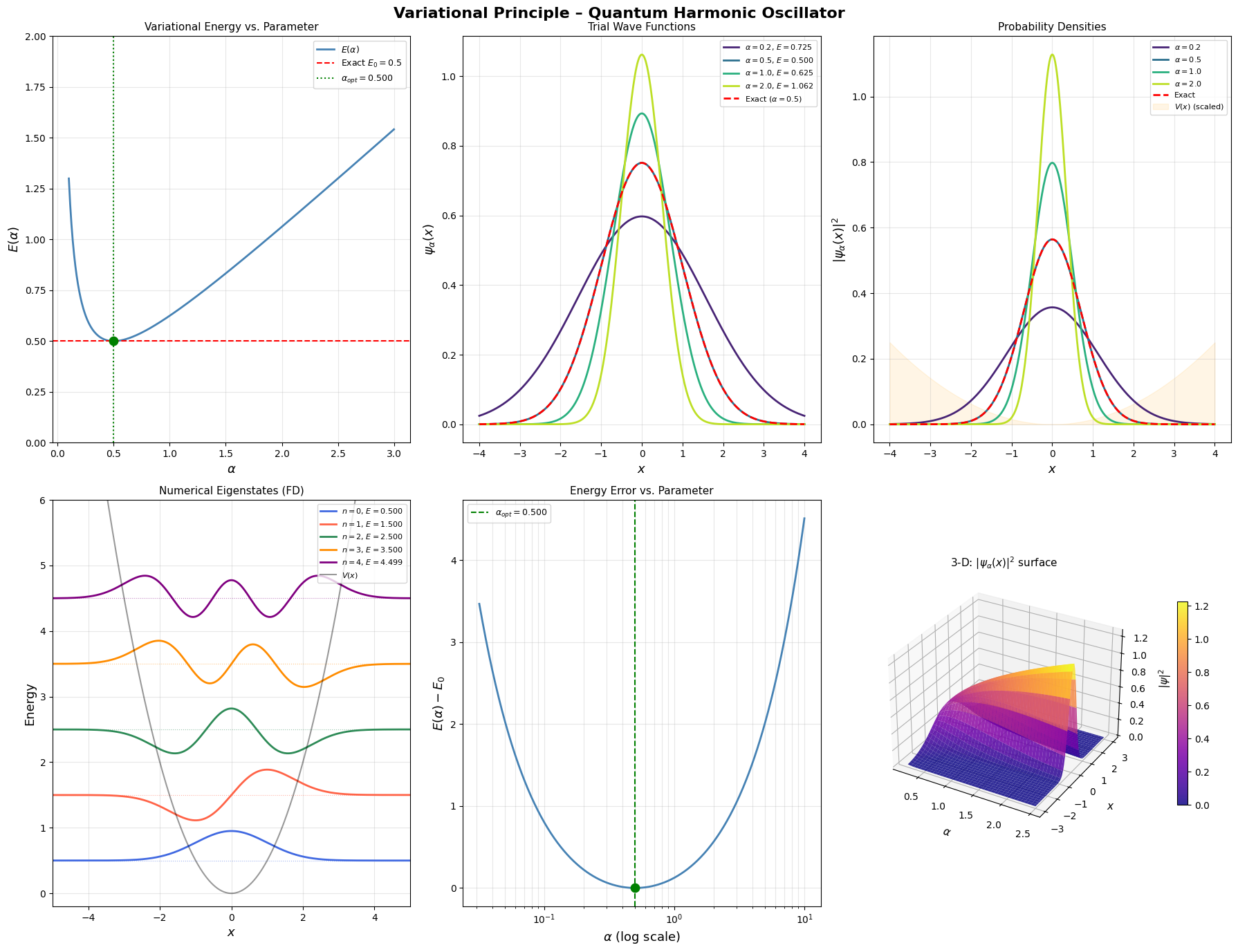

Graph Explanation

| Panel | What it shows |
|---|---|
| Top-left | $E(\alpha)$ curve. The green dot marks the minimum; the red dashed line is the exact $E_0 = 0.5$. The curve is convex, guaranteeing a unique minimum. |
| Top-center | Shape of $\psi_\alpha(x)$ for four $\alpha$ values. Broader for smaller $\alpha$, narrower for larger $\alpha$. The red dashed line is the exact solution. |
| Top-right | Probability densities $\vert\psi_\alpha\vert^2$. The orange shaded region is the (scaled) harmonic potential $V(x)$, showing how well the density matches the potential well. |
| Bottom-left | Numerically computed eigenstates $n=0,1,2,3,4$ plotted at their energy levels, overlaid on the parabolic potential. A textbook quantum ladder. |
| Bottom-center | Energy error $E(\alpha) - E_0$ on a log-$\alpha$ axis. The minimum error occurs precisely at $\alpha_{opt}=0.5$; any deviation increases the error. |
| Bottom-right | 3-D surface of $\vert\psi_\alpha(x)\vert^2$ as a function of both $\alpha$ and $x$. When $\alpha$ is large the probability is squeezed into a sharp ridge near $x=0$; when $\alpha$ is small the ridge flattens out widely. The optimal $\alpha$ sits between these extremes and matches the harmonic potential geometry. |

Execution Results

    ==================================================
    Optimal alpha : 0.499999
    Variational E_min : 0.500000
    Exact E_0 : 0.500000
    Error : 1.43e-12
    ==================================================
    Numerical eigenvalues (finite-difference):
    E_0 = 0.499987 (exact = 0.500000)
    E_1 = 1.499937 (exact = 1.500000)
    E_2 = 2.499837 (exact = 2.500000)
    E_3 = 3.499687 (exact = 3.500000)
    E_4 = 4.499486 (exact = 4.500000)

Figure saved → variational_qho.png

Key Takeaways

The variational principle guarantees:

$$E[\psi_\alpha] \geq E_0 \quad \forall\, \alpha$$

For the harmonic oscillator, the Gaussian family contains the exact ground state (at $\alpha = \frac{1}{2}$), so the variational minimum hits $E_0$ exactly. For more complex potentials (e.g., anharmonic oscillators, atoms), a simple trial family generally does not contain the exact ground state, and the variational energy remains strictly above the true value. That upper-bound property is what makes the method so trustworthy: the true ground state energy can only lie at or below the variational estimate.
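To illustrate the anharmonic case, the sketch below adds a quartic term $\lambda x^4$ to the potential and keeps the same Gaussian trial family. Everything specific here is an assumption made for illustration: the coupling `lam = 1.0`, the grid, and the use of a finite-difference diagonalization as a reference estimate (not an exact value). The extra term uses the Gaussian moment $\langle x^4 \rangle = 3/(16\alpha^2)$.

```python
import numpy as np
from scipy.linalg import eigh
from scipy.optimize import minimize_scalar

lam = 1.0  # assumed quartic coupling: V(x) = x^2/2 + lam * x^4


def variational_energy(alpha):
    """Gaussian-trial energy: alpha/2 + 1/(8 alpha) + 3 lam / (16 alpha^2)."""
    return alpha / 2.0 + 1.0 / (8.0 * alpha) + 3.0 * lam / (16.0 * alpha**2)


result = minimize_scalar(variational_energy, bounds=(0.05, 5.0), method="bounded")
e_var = result.fun

# Reference estimate from a 3-point finite-difference Hamiltonian (assumed grid).
x = np.linspace(-6.0, 6.0, 1201)
dx = x[1] - x[0]
main_diag = 1.0 / dx**2 + 0.5 * x**2 + lam * x**4
off_diag = np.full(x.size - 1, -0.5 / dx**2)
H = np.diag(main_diag) + np.diag(off_diag, 1) + np.diag(off_diag, -1)
e_ref = eigh(H, eigvals_only=True, subset_by_index=[0, 0])[0]

print(f"Optimal alpha        : {result.x:.4f}")
print(f"Variational E_min    : {e_var:.6f}")
print(f"Finite-difference E0 : {e_ref:.6f}")
print(f"Gap (E_var - E_ref)  : {e_var - e_ref:.6f}")
```

Under these assumptions the Gaussian minimum stays above the grid-based reference, which is the behavior the upper-bound property predicts when the trial family does not contain the true ground state.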